Yeah, I hate Facebook, and I got suckered into it as a request for my work with a non-profit. To be honest, I did not get much from Fuck-You-Book in that regard. My non-profit even purchased Google and Facebook ads, and that brought my work zilch.

So, engaging in robust, funny, sarcastic, profane, anti-authority, pushing the edge of the envelope sort of stuff, well, this is Nanny Book, with a HUGE hypocritical bending over and holding one’s ankles.

When you read this shit about “Community Standards,” think Brave New World, 1984 and Digital Gulag all wrapped into one ugly aggregator of vileness.

Again, revolution will not happen on Facebook, and real change will never be facilitated by using this shit hole space.

That’s about all the energy I have now to discuss this. Look at the shit below. Hypocrisy, gatekeeping, anti-democratic, certainly not tied to any real First Amendment rights.

Fuck You Book has been a very ugly experience for me, and you betcha, getting banned for 24 hours or whatever is pathetic. Alas, they have some shit-hole tool to protest the ban, but that isn’t working now, so the shit-hole that is the F/Zuckerberg stays the shit-hole he and all his Little Eichmanns in his stable wallow in.

This shit below is written by his stable of lawyers, some I am sure being his closest buddies from bar mitzvah land. Oh yeah, somehow that is hate speech, bringing up bat mitzvahs and bar mitzvahs.

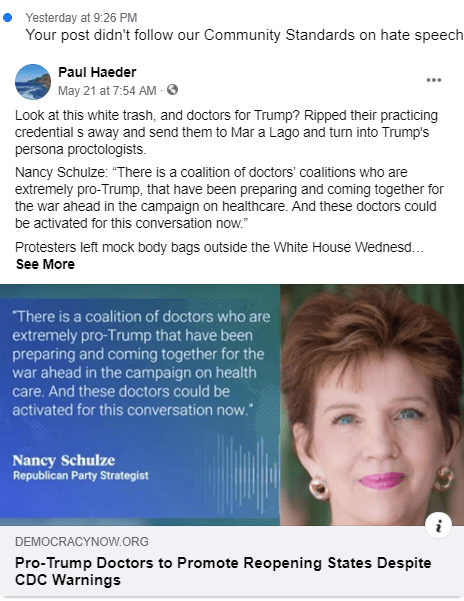

This is why I am banned:

From fucking Democracy Now! Banning Amy Goodman now?

INTRODUCTION

Every day, people use Facebook to share their experiences, connect with friends and family, and build communities. We are a service for more than two billion people to freely express themselves across countries and cultures and in dozens of languages.

We recognize how important it is for Facebook to be a place where people feel empowered to communicate, and we take seriously our role in keeping abuse off our service. That’s why we’ve developed a set of Community Standards that outline what is and is not allowed on Facebook. Our policies are based on feedback from our community and the advice of experts in fields such as technology, public safety and human rights. To ensure that everyone’s voice is valued, we take great care to craft policies that are inclusive of different views and beliefs, in particular those of people and communities that might otherwise be overlooked or marginalized.

REITERATING OUR COMMITMENT TO VOICE

The goal of our Community Standards has always been to create a place for expression and give people a voice. This has not and will not change. Building community and bringing the world closer together depends on people’s ability to share diverse views, experiences, ideas and information. We want people to be able to talk openly about the issues that matter to them, even if some may disagree or find them objectionable. In some cases, we allow content which would otherwise go against our Community Standards – if it is newsworthy and in the public interest. We do this only after weighing the public interest value against the risk of harm and we look to international human rights standards to make these judgments.

Our commitment to expression is paramount, but we recognize the internet creates new and increased opportunities for abuse. For these reasons, when we limit expression, we do it in service of one or more of the following values:

Authenticity: We want to make sure the content people are seeing on Facebook is authentic. We believe that authenticity creates a better environment for sharing, and that’s why we don’t want people using Facebook to misrepresent who they are or what they’re doing.

Safety: We are committed to making Facebook a safe place. Expression that threatens people has the potential to intimidate, exclude or silence others and isn’t allowed on Facebook.

Privacy: We are committed to protecting personal privacy and information. Privacy gives people the freedom to be themselves, and to choose how and when to share on Facebook and to connect more easily.

Dignity: We believe that all people are equal in dignity and rights. We expect that people will respect the dignity of others and not harass or degrade others.

Our Community Standards apply to everyone, all around the world, and to all types of content. They’re designed to be comprehensive – for example, content that might not be considered hateful may still be removed for violating a different policy. We recognize that words mean different things or affect people differently depending on their local community, language, or background. We work hard to account for these nuances while also applying our policies consistently and fairly to people and their expression. In the case of certain policies, we require more information and/or context to enforce in line with our Community Standards.

People can report potentially violating content, including Pages, Groups, Profiles, individual content, and comments. We also give people control over their own experience by allowing them to block, unfollow or hide people and posts.

The consequences for violating our Community Standards vary depending on the severity of the violation and the person’s history on the platform. For instance, we may warn someone for a first violation, but if they continue to violate our policies, we may restrict their ability to post on Facebook or disable their profile. We also may notify law enforcement when we believe there is a genuine risk of physical harm or a direct threat to public safety.

Our Community Standards are a guide for what is and isn’t allowed on Facebook. It is in this spirit that we ask members of the Facebook community to follow these guidelines.

Please note that the English version of the Community Standards reflects the most up to date set of the policies and should be used as the master document.

Violence and Criminal Behavior

1. Violence and Incitement

We aim to prevent potential offline harm that may be related to content on Facebook. While we understand that people commonly express disdain or disagreement by threatening or calling for violence in non-serious ways, we remove language that incites or facilitates serious violence. We remove content, disable accounts, and work with law enforcement when we believe there is a genuine risk of physical harm or direct threats to public safety. We also try to consider the language and context in order to distinguish casual statements from content that constitutes a credible threat to public or personal safety. In determining whether a threat is credible, we may also consider additional information like a person’s public visibility and the risks to their physical safety.

In some cases, we see aspirational or conditional threats directed at terrorists and other violent actors (e.g. Terrorists deserve to be killed), and we deem those non credible absent specific evidence to the contrary.

2. Dangerous Individuals and Organizations

In an effort to prevent and disrupt real-world harm, we do not allow any organizations or individuals that proclaim a violent mission or are engaged in violence to have a presence on Facebook. This includes organizations or individuals involved in the following:

We also remove content that expresses support or praise for groups, leaders, or individuals involved in these activities. Learn more about our work to fight terrorism online here.

3. Coordinating Harm and Publicizing Crime

In an effort to prevent and disrupt offline harm and copycat behavior, we prohibit people from facilitating, organizing, promoting, or admitting to certain criminal or harmful activities targeted at people, businesses, property or animals. We allow people to debate and advocate for the legality of criminal and harmful activities, as well as draw attention to harmful or criminal activity that they may witness or experience as long as they do not advocate for or coordinate harm.

4. Regulated Goods

To encourage safety and compliance with common legal restrictions, we prohibit attempts by individuals, manufacturers, and retailers to purchase, sell, or trade non-medical drugs, pharmaceutical drugs, and marijuana. We also prohibit the purchase, sale, gifting, exchange, and transfer of firearms, including firearm parts or ammunition, between private individuals on Facebook. Some of these items are not regulated everywhere; however, because of the borderless nature of our community, we try to enforce our policies as consistently as possible. Firearm stores and online retailers may promote items available for sale off of our services as long as those retailers comply with all applicable laws and regulations. We allow discussions about sales of firearms and firearm parts in stores or by online retailers and advocating for changes to firearm regulation. Regulated goods that are not prohibited by our Community Standards may be subject to our more stringent Commerce Policies.

5. Fraud and Deception

In an effort to prevent and disrupt harmful or fraudulent activity, we remove content aimed at deliberately deceiving people to gain an unfair advantage or deprive another of money, property, or legal right. However, we allow people to raise awareness and educate others as well as condemn these activities using our platform.

Safety

6. Suicide and Self-Injury

In an effort to promote a safe environment on Facebook, we remove content that encourages suicide or self-injury, including certain graphic imagery and real-time depictions that experts tell us might lead others to engage in similar behavior. Self-injury is defined as the intentional and direct injuring of the body, including self-mutilation and eating disorders. We want Facebook to be a space where people can share their experiences, raise awareness about these issues, and seek support from one another, which is why we allow people to discuss suicide and self-injury.

We work with organizations around the world to provide assistance to people in distress. We also talk to experts in suicide and self-injury to help inform our policies and enforcement. For example, we have been advised by experts that we should not remove live videos of self-injury while there is an opportunity for loved ones and authorities to provide help or resources.

In contrast, we remove any content that identifies and negatively targets victims or survivors of self-injury or suicide seriously, humorously, or rhetorically.

Learn more about our suicide and self-injury policies and the resources that we provide.

7. Child Nudity and Sexual Exploitation of Children

We do not allow content that sexually exploits or endangers children. When we become aware of apparent child exploitation, we report it to the National Center for Missing and Exploited Children (NCMEC), in compliance with applicable law. We know that sometimes people share nude images of their own children with good intentions; however, we generally remove these images because of the potential for abuse by others and to help avoid the possibility of other people reusing or misappropriating the images.

We also work with external experts, including the Facebook Safety Advisory Board, to discuss and improve our policies and enforcement around online safety issues, especially with regard to children. Learn more about the new technology we’re using to fight against child exploitation.

8. Sexual Exploitation of Adults

We recognize the importance of Facebook as a place to discuss and draw attention to sexual violence and exploitation. We believe this is an important part of building common understanding and community. In an effort to create space for this conversation while promoting a safe environment, we remove content that depicts, threatens or promotes sexual violence, sexual assault, or sexual exploitation, while also allowing space for victims to share their experiences. We remove content that displays, advocates for, or coordinates sexual acts with non-consenting parties or commercial sexual services, such as prostitution and escort services. We do this to avoid facilitating transactions that may involve trafficking, coercion, and non-consensual sexual acts.

To protect victims and survivors, we also remove images that depict incidents of sexual violence and intimate images shared without permission from the people pictured. We’ve written about the technology we use to protect against intimate images and the research that has informed our work. We’ve also put together a guide to reporting and removing intimate images shared without your consent.

9. Bullying and Harassment

Bullying and harassment happen in many places and come in many different forms, from making threats to releasing personally identifiable information, to sending threatening messages, and making unwanted malicious contact. We do not tolerate this kind of behavior because it prevents people from feeling safe and respected on Facebook.

We distinguish between public figures and private individuals because we want to allow discussion, which often includes critical commentary of people who are featured in the news or who have a large public audience. For public figures, we remove attacks that are severe as well as certain attacks where the public figure is directly tagged in the post or comment. For private individuals, our protection goes further: we remove content that’s meant to degrade or shame, including, for example, claims about someone’s sexual activity. We recognize that bullying and harassment can have more of an emotional impact on minors, which is why our policies provide heightened protection for users between the ages of 13 and 18.

Context and intent matter, and we allow people to share and re-share posts if it is clear that something was shared in order to condemn or draw attention to bullying and harassment. In certain instances, we require self-reporting because it helps us understand that the person targeted feels bullied or harassed. In addition to reporting such behavior and content, we encourage people to use tools available on Facebook to help protect against it.

We also have a Bullying Prevention Hub, which is a resource for teens, parents, and educators seeking support for issues related to bullying and other conflicts. It offers step-by-step guidance, including information on how to start important conversations about bullying. Learn more about what we’re doing to protect people from bullying and harassment here.

10. Human Exploitation

After consulting with outside experts from around the world, we are consolidating several existing exploitation policies that were previously housed in different sections of the Community Standards into one dedicated section that focuses on human exploitation and captures a broad range of harmful activities that may manifest on our platform. Experts think and talk about these issues under one umbrella — human exploitation.

In an effort to disrupt and prevent harm, we remove content that facilitates or coordinates the exploitation of humans, including human trafficking. We define human trafficking as the business of depriving someone of liberty for profit. It is the exploitation of humans in order to force them to engage in commercial sex, labor, or other activities against their will. It relies on deception, force and coercion, and degrades humans by depriving them of their freedom while economically or materially benefiting others.

Human trafficking is multi-faceted and global; it can affect anyone regardless of age, socioeconomic background, ethnicity, gender, or location. It takes many forms, and any given trafficking situation can involve various stages of development. By the coercive nature of this abuse, victims cannot consent.

While we need to be careful not to conflate human trafficking and smuggling, the two can be related and exhibit overlap. The United Nations defines human smuggling as the procurement or facilitation of illegal entry into a state across international borders. Without necessity for coercion or force, it may still result in the exploitation of vulnerable individuals who are trying to leave their country of origin, often in pursuit of a better life. Human smuggling is a crime against a state, relying on movement, and human trafficking is a crime against a person, relying on exploitation.

11. Privacy Violations and Image Privacy Rights

Privacy and the protection of personal information are fundamentally important values for Facebook. We work hard to keep your account secure and safeguard your personal information in order to protect you from potential physical or financial harm. You should not post personal or confidential information about others without first getting their consent. We also provide people ways to report imagery that they believe to be in violation of their privacy rights.

12. Hate Speech

We do not allow hate speech on Facebook because it creates an environment of intimidation and exclusion and in some cases may promote real-world violence.

We define hate speech as a direct attack on people based on what we call protected characteristics — race, ethnicity, national origin, religious affiliation, sexual orientation, caste, sex, gender, gender identity, and serious disease or disability. We protect against attacks on the basis of age when age is paired with another protected characteristic, and also provide certain protections for immigration status. We define attack as violent or dehumanizing speech, statements of inferiority, or calls for exclusion or segregation. We separate attacks into three tiers of severity, as described below.

Sometimes people share content containing someone else’s hate speech for the purpose of raising awareness or educating others. In some cases, words or terms that might otherwise violate our standards are used self-referentially or in an empowering way. People sometimes express contempt in the context of a romantic break-up. Other times, they use gender-exclusive language to control membership in a health or positive support group, such as a breastfeeding group for women only. In all of these cases, we allow the content but expect people to clearly indicate their intent, which helps us better understand why they shared it. Where the intention is unclear, we may remove the content.

We allow humor and social commentary related to these topics. In addition, we believe that people are more responsible when they share this kind of commentary using their authentic identity.

Click here to read our Hard Questions Blog and learn more about our approach to hate speech.

13. Violent and Graphic Content

We remove content that glorifies violence or celebrates the suffering or humiliation of others because it may create an environment that discourages participation. We allow graphic content (with some limitations) to help people raise awareness about issues. We know that people value the ability to discuss important issues like human rights abuses or acts of terrorism. We also know that people have different sensitivities with regard to graphic and violent content. For that reason, we add a warning label to especially graphic or violent content so that it is not available to people under the age of eighteen and so that people are aware of the graphic or violent nature before they click to see it.

14. Adult Nudity and Sexual Activity

We restrict the display of nudity or sexual activity because some people in our community may be sensitive to this type of content. Additionally, we default to removing sexual imagery to prevent the sharing of non-consensual or underage content. Restrictions on the display of sexual activity also apply to digitally created content unless it is posted for educational, humorous, or satirical purposes.

Our nudity policies have become more nuanced over time. We understand that nudity can be shared for a variety of reasons, including as a form of protest, to raise awareness about a cause, or for educational or medical reasons. Where such intent is clear, we make allowances for the content. For example, while we restrict some images of female breasts that include the nipple, we allow other images, including those depicting acts of protest, women actively engaged in breast-feeding, and photos of post-mastectomy scarring. We also allow photographs of paintings, sculptures, and other art that depicts nude figures.

15. Sexual Solicitation

As noted in Section 8 of our Community Standards (Sexual Exploitation of Adults), people use Facebook to discuss and draw attention to sexual violence and exploitation. We recognize the importance of and want to allow for this discussion. We draw the line, however, when content facilitates, encourages or coordinates sexual encounters between adults. We also restrict sexually explicit language that may lead to solicitation because some audiences within our global community may be sensitive to this type of content and it may impede the ability for people to connect with their friends and the broader community.

16. Cruel and Insensitive

We believe that people share and connect more freely when they do not feel targeted based on their vulnerabilities. As such, we have higher expectations for content that we call cruel and insensitive, which we define as content that targets victims of serious physical or emotional harm.

We remove explicit attempts to mock victims and mark as cruel implicit attempts, many of which take the form of memes and GIFs.

17. Misrepresentation

Authenticity is the cornerstone of our community. We believe that people are more accountable for their statements and actions when they use their authentic identities. That’s why we require people to connect on Facebook using the name they go by in everyday life. Our authenticity policies are intended to create a safe environment where people can trust and hold one another accountable.

18. Spam

We work hard to limit the spread of spam because we do not want to allow content that is designed to deceive, or that attempts to mislead users to increase viewership. This content creates a negative user experience and detracts from people’s ability to engage authentically in online communities. We also aim to prevent people from abusing our platform, products, or features to artificially increase viewership or distribute content en masse for commercial gain.

19. Cybersecurity

We recognize that the safety of our users extends to the security of their personal information. Attempts to gather sensitive personal information by deceptive or invasive methods are harmful to the authentic, open, and safe atmosphere that we want to foster. Therefore, we do not allow attempts to gather sensitive user information through the abuse of our platform and products.

20. Inauthentic Behavior

In line with our commitment to authenticity, we don’t allow people to misrepresent themselves on Facebook, use fake accounts, artificially boost the popularity of content, or engage in behaviors designed to enable other violations under our Community Standards. This policy is intended to create a space where people can trust the people and communities they interact with.

21. False News

Reducing the spread of false news on Facebook is a responsibility that we take seriously. We also recognize that this is a challenging and sensitive issue. We want to help people stay informed without stifling productive public discourse. There is also a fine line between false news and satire or opinion. For these reasons, we don’t remove false news from Facebook but instead, significantly reduce its distribution by showing it lower in the News Feed. Learn more about our work to reduce the spread of false news here.

22. Manipulated Media

Media, including image, audio, or video, can be edited in a variety of ways. In many cases, these changes are benign, like a filter effect on a photo. In other cases, the manipulation isn’t apparent and could mislead, particularly in the case of video content. We aim to remove this category of manipulated media when the criteria laid out below have been met.

In addition, we will continue to invest in partnerships (including with journalists, academics and independent fact-checkers) to help us reduce the distribution of false news and misinformation, as well as to better inform people about the content they encounter online.

23. Memorialization

When someone passes away, friends and family can request that we memorialize the Facebook account. Once memorialized, the word “Remembering” appears above the name on the person’s profile to help make it clear that the account is now a memorial site and protects against attempted logins and fraudulent activity. To respect the choices someone made while alive, we aim to preserve their account after they pass away. We have also made it possible for people to identify a legacy contact to look after their account after they pass away. To support the bereaved, in some instances we may remove or change certain content when the legacy contact or family members request it.

Visit Hard Questions for more information about our memorialization policy and process. And see our Newsroom for the latest tools we’re building to support people during these difficult times.

24. Intellectual Property

Facebook takes intellectual property rights seriously and believes they are important to promoting expression, creativity, and innovation in our community. You own all of the content and information you post on Facebook, and you control how it is shared through your privacy and application settings. However, before sharing content on Facebook, please be sure you have the right to do so. We ask that you respect other people’s copyrights, trademarks, and other legal rights. We are committed to helping people and organizations promote and protect their intellectual property rights. Facebook’s Terms of Service do not allow people to post content that violates someone else’s intellectual property rights, including copyright and trademark. We publish information about the intellectual property reports we receive in our bi-annual Transparency Report, which can be accessed at https://transparency.facebook.com/

25. User Requests

We comply with:

User requests for removal of their own account

Requests for removal of a deceased user’s account from a verified immediate family member or executor

Requests for removal of an incapacitated user’s account from an authorized representative

26. Additional Protection of Minors

We comply with:

Requests for removal of an underage account

Government requests for removal of child abuse imagery depicting, for example, beating by an adult or strangling or suffocating by an adult

Legal guardian requests for removal of attacks on unintentionally famous minors

Stakeholder Engagement

Gathering input from our stakeholders is an important part of how we develop Facebook’s Community Standards. We want our policies to be based on feedback from community representatives and a broad spectrum of the people who use our service, and we want to learn from and incorporate the advice of experts.

Engagement makes our Community Standards stronger and more inclusive. It brings our stakeholders more fully into the policy development process, introduces us to new perspectives, allows us to share our thinking on policy options, and roots our policies in sources of knowledge and experience that go beyond Facebook.

Product Policy is the team that writes the rules for what people are allowed to share on Facebook, including the Community Standards. To open up the policy development process and gather outside views on our policies, we created the Stakeholder Engagement team, a sub-team that’s part of Product Policy. Stakeholder Engagement’s main goal is to ensure that our policy development process is informed by the views of outside experts and the people who use Facebook. We have developed specific practices and a structure for engagement in the context of the Community Standards, and we’re expanding our work to cover additional policies, particularly ads policies and major News Feed ranking changes.

In this post, we provide an overview of how stakeholder engagement contributes to the Community Standards. While Facebook is of course responsible for the substance of its policies, engagement helps us improve those policies and deepen important stakeholder relationships in ways we’ll explain. To benchmark our work and help us incorporate best practices in this area, we retained non-profit organization BSR (Business for Social Responsibility). BSR conducted an analysis that has informed our perspective on engagement, and that we’ve used in preparing this post.

WHO ARE OUR STAKEHOLDERS?

By “stakeholders” we mean all organizations and individuals who are impacted by, and therefore have a stake in, Facebook’s Community Standards. Because the Community Standards apply to every post, photo, and video shared on Facebook, this means that our more than 2.7 billion users are, in a broad sense, stakeholders.

But we can’t meaningfully engage with that many people. So it’s also useful to think of stakeholders as those who are informed about and able to speak on behalf of others. This is why the primary focus of our engagement is civil society organizations, activist groups, and thought leaders, in such areas as digital and civil rights, anti-discrimination, free speech, and human rights. We also engage with academics who have relevant expertise. Academics may not directly represent the interests of others, but they are important stakeholders by virtue of their extensive knowledge, which helps us create better policies for everyone.

HOW DO WE INCORPORATE STAKEHOLDER ENGAGEMENT INTO OUR POLICY DEVELOPMENT PROCESS?

Integrating stakeholder feedback into the policy-making process is a core part of how we work. Though it’s important that we not over-promise, we know that what stakeholders seek above all is for their insights to inform our policy decisions.

There are many reasons why we may draft a new policy or revise an existing one. We continuously build our policies to meet the needs of our community. Sometimes external stakeholders tell us that a particular policy fails to address an issue that’s important to them. In other cases, the press draws attention to a policy gap. Often, members of Facebook’s Community Operations team (whose employees, contractors, and out-sourcing partners are responsible for enforcing the Community Standards) tell us about trends or the need for policy clarification. And Facebook’s Research teams (both within Product Policy and in other parts of the company) may point us to data or user sentiment that seems best addressed through policy-making.

In considering a new policy, Product Policy runs a series of internal working groups, leading up to a cross-team meeting we call the Product Policy Forum (previously referred to as the Content Standards Forum). We’ve explained the Content Standards Forum in detail here.

At the outset, the Stakeholder Engagement team frames up policy questions requiring feedback and determines what types of stakeholders to prioritize for engagement. We then reach out to external stakeholders, gathering feedback that we document and synthesize for our colleagues.

Our engagement on the Community Standards takes many forms. The heart of our approach to engagement is private conversations, most often in person or by video-conference. We’ve found that this approach lends itself to candid dialogue and relationship-building. We typically don’t release the names of those we engage with because conversations can be sensitive and we want to ensure open lines of communication. Some stakeholders may also request or require confidentiality, particularly if media attention is unwanted or if they are members of a vulnerable community.

In addition, we sometimes convene group discussions, bringing together stakeholders in particular regions or specific policy areas. We’ve found the group setting to be useful for generating ideas and providing updates to multiple stakeholders. And on occasion it also makes sense to reach out to relevant Facebook users to get their views. Recently, for example, we reviewed the “exclusion” element of our hate speech policy. As part of this process, we talked to the admins from a number of major Facebook Groups (admins are responsible for managing Group settings), who shared their insights with us relating to this policy. We’ll do more of this user outreach in the future.

In our conversations with external stakeholders, we share Facebook’s thinking on the proposed policy change, including what led us to reconsider this policy, and the pros and cons of policy options we’ve identified. The feedback we receive is fed into the process and shapes our ongoing deliberations by highlighting new perspectives and helping us evaluate our options. When stakeholder views conflict, we analyze the spectrum of opinion and points of disagreement. We want to identify which views are most persuasive and instructive for us, but we’re not necessarily trying to reconcile them; rather, our goal is to understand the full range of opinion concerning the proposal. In some cases we return to stakeholders for additional input as our thinking develops.

At the Product Policy Forum, the Stakeholder Engagement team presents a detailed summary of the feedback we’ve received on each policy proposal, and we lay out the views of our stakeholders on a spectrum of policy options. This summary is made public (minus the names of individual stakeholders and organizations) in our published minutes of the meeting. In this way, anyone can see the range and nature of engagement we’ve conducted for policy proposals, and the rationale for our final decision. After policy development is complete, we inform our stakeholders what we’ve decided.

WHAT PRINCIPLES GUIDE OUR ENGAGEMENT?

A commitment to stakeholder engagement means addressing a number of essential questions — such as, how do we decide which groups and individuals to talk to, how do we make sure that vulnerable groups are heard, and how do we find relevant experts?

Our policies involve a complex balancing of values such as safety, voice, and equity. There’s no simple formula for how engagement contributes to this work. But over the past year we’ve developed a structure and methodology for engagement on our Community Standards, built around three core principles:

Inclusiveness

Engagement broadens our perspective and creates a more inclusive approach to policy-making.

Engagement helps us better understand how our policies impact those who use our service. When we make decisions about what content to remove and what to leave up, we affect people’s speech and the way they connect on Facebook. Not everyone will agree on where we draw the lines, but at a minimum, we need to understand the concerns of those who are affected by our policies. This is particularly important for stakeholders whose voices have been marginalized.

Understanding how our policies affect the people who use Facebook presents a major challenge. Our scale makes it impossible to speak directly to the more than 2.7 billion people who use our platform. This dilemma also underscores the importance of reaching out to a broad spectrum of stakeholders in all regions so that our policy-making process is globally diverse.

The question of our impact plays out in many ways. Our policies are global (the Internet is borderless, and our mission is to build community), but we touch people’s lives on a very local level. We are often asked, “Why should you be creating policies to govern online speech for me?” Typically embedded in this question is a demand that we show more cultural sensitivity and understanding of regional context.

Stakeholder engagement gives us a tool to deepen our local knowledge and perspective – so we can hear voices we might otherwise miss. For each policy proposal, we identify a global and diverse set of stakeholders on the issue. We seek voices across the policy spectrum, but it’s not always self-evident what the spectrum is. In many cases, our policies don’t line up neatly with traditional dichotomies, such as liberal versus conservative, or civil libertarian versus law enforcement. We talk to others in Facebook’s Policy and Research organizations and conduct our own research to identify a range of diverse stakeholders.

For example, in considering how our hate speech policy should apply to certain forms of gendered language, we spoke with academic experts, women’s and digital rights groups, and free speech advocates. Likewise, when considering our policy on nudity and sexual activity in art, we listened to family safety organizations, artists, and museum curators. In reviewing how our policies should apply to memorialized profiles of deceased users, we connected both with professors who study digital legacy as an academic subject and with Facebook users who’ve been designated as “Legacy Contacts” and who have real-world experience with this product feature.

It’s not enough to ask how our policies affect users in general. We need to understand how our policies will impact people who are particularly vulnerable by virtue of laws, cultural practices, poverty, or other reasons that prevent them from speaking up for their rights. In our stakeholder mapping, we seek to put an emphasis on minority groups that have traditionally lacked power, such as political dissidents and religious minorities throughout the world. In reevaluating how our hate speech policy applies to certain behavioral generalizations, for example, we consulted with immigrants’ rights groups. Our efforts are a work in progress, but we are committed to bringing these voices into our policy discussions.

Expertise

Engagement brings expertise to our policy development process.

The Stakeholder Engagement team conducts detailed, iterative research to identify top subject matter experts in civil society and academia. It then gathers their views to inform our policy decisions.

This engagement ensures that our policy-making process is informed by current theories and analysis, empirical research, and an understanding of the latest online trends. The expertise we gather includes issues of language, social identity, and geography, all of which bear on our policies in important ways.

Facebook’s policies are entwined with many complex social and technological issues, such as hate speech, terrorism, bullying and harassment, and threats of violence. Sometimes we’re looking for guidance on how safety and voice should be balanced — for example, in considering what types of speech to allow about “public figures” under our policies. In other cases, we’re reaching out to gain specialized knowledge, such as how our policies can draw on international human rights principles, or how minority communities may experience certain types of speech.

We don’t have all the answers to address these problems on our platform. Sometimes the challenges we face are novel even to the experts we consult with. But by talking to outside experts and incorporating their feedback, we make our policies more thoughtful.

For example, over the past year and a half we’ve modified our hate speech policy to recognize three tiers of attacks. Tier 1, the most severe, involves calls to violence or dehumanizing speech against other people based on their race, ethnicity, nationality, gender or other protected characteristic (“Kill the Christians”). Tier 2 attacks consist of statements of inferiority or expressions of contempt or disgust (“Mexicans are lazy”). And Tier 3 covers calls to exclude or segregate (“No women allowed”).

Developing these tiers has made our policies more nuanced and precise. On the basis of the tiers, we’re able to provide additional protections against the most harmful forms of speech. For instance, we now remove Tier 1 hate speech directed against immigrants (“immigrants are rats”) but permit less intense forms of speech (“immigrants should stay out of our country”) to leave room for broad political discourse.

As part of our work we spoke to outside experts — academics, NGOs that study hate speech, and groups all across the political landscape. This engagement helped confirm that the tiers were comprehensive and aligned with patterns of online and offline behavior. We’ll continue to consider adjustments to our policies in light of opinions from experts and civil society.

Transparency

Engagement makes our policies and our policy development process more transparent.

Given the impact of our Community Standards on society, it’s critical for us to create a policy development process that’s not only inclusive and based on expert knowledge, but also transparent. We know from talking to hundreds of stakeholders that opening up our policy-making process helps build trust. The more visibility we provide, the more our stakeholders are likely to view the Community Standards as relevant, legitimate, and based on consent.

When we engage, we share details about the challenges of moderating content for 2.7 billion people and we explain the rationale behind our policies and why there may be a need for improvement. We gather stakeholder feedback so we can develop creative policy solutions to these problems. The policies we launch based on this process are still owned by Facebook, but they are stronger by virtue of having been tested through consultation and an exchange of views.

It’s important to acknowledge that our policies will never make everyone happy. Nudity, say, is viewed quite differently in Scandinavia and Southeast Asia, and no Facebook policy on nudity would be equally satisfactory to both. Our job as a team is to craft thoughtful global policies, knowing that our work will be criticized by some.

Transparency on our process of engagement also helps us build a system of rules and enforcement that people regard as fair. We know that some people would like us to go further and disclose the names of our stakeholders and even the substance of our discussions with them. For reasons discussed above, we’ve chosen not to go this route, at least for now. We’ll continue to experiment with ways of being more public about engagement where we have the prior agreement of our stakeholders.

THE WAY FORWARD

As the breadth and specialization of our policies increase, so too will the scope of our engagement. We’ll continue to refine our policy development process and work to realize our stakeholder engagement principles of inclusiveness, expertise, and transparency. We expect our team to grow, and our reach to expand.

With BSR’s help, we’re also working on specific ways to improve.

For example, we’ll continue to develop means to engage with new stakeholders around the world and will seek guidance from regional experts about how to do so most effectively. We’ve also had requests to explore channels like informal roundtables and recurring video-conference meetings as a way of staying in touch with stakeholders on specific policy issues. These settings provide continuity and enable us to involve stakeholders even more closely in the design of specific policies and products.

As we expand the scope of our outreach, we also want to investigate whether we might be able to create other mechanisms for users to give us feedback on our policies. For example, as part of the effort to gather global feedback on the Oversight Board, Facebook created a public consultation process containing both a questionnaire and free-form questions. Through this tool, users were able to submit their views on the Board directly to Facebook. One could imagine a similar process whereby NGOs and civil society organizations could join our network of contacts in order to receive regular policy updates and provide feedback to members of our team.

These are all exciting challenges, and we look forward to working with our stakeholders to improve the level of our engagement and its contribution to the development of our policies.